7 Powerful Insights About Multimodal AI: How It Is Transforming the Future of Artificial Intelligence

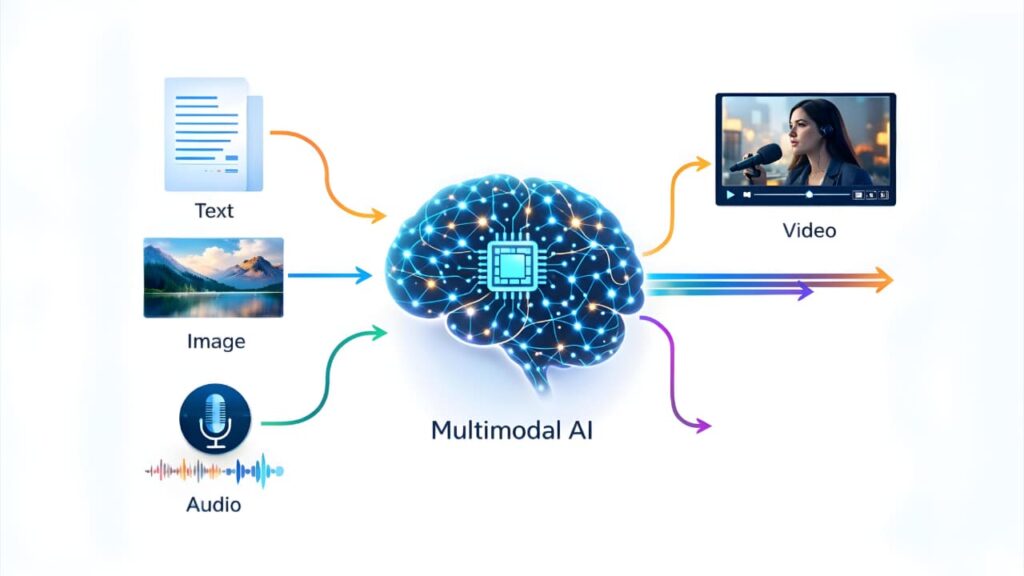

Artificial Intelligence is evolving faster than ever. In recent years, one of the most exciting developments in this field has been multimodal AI. Unlike traditional AI systems that process only one type of data, multimodal AI can understand and combine multiple forms of information such as text, images, audio, and video.

This advancement is changing how machines interact with the world and how humans interact with technology. From smarter virtual assistants to advanced healthcare systems, multimodal AI is opening doors to a new era of intelligent systems.

In this article, you will learn what multimodal AI is, how it works, its benefits, real-world applications, challenges, and why experts believe it will shape the future of artificial intelligence.

What Is Multimodal AI?

Multimodal AI refers to artificial intelligence systems that can process and understand multiple types of data inputs simultaneously. These inputs may include text, images, speech, video, and even sensor data.

Traditional AI models usually focus on one type of data. For example, a language model understands text, while a computer vision model analyzes images. Multimodal AI combines these abilities into a single system that can interpret information in a more human-like way.

Humans naturally process information using multiple senses. When you watch a movie, you understand the story through visuals, dialogue, music, and emotions. Multimodal AI tries to replicate this type of understanding.

Because of this capability, multimodal systems can often generate richer insights and provide more accurate responses than single-modal AI models, particularly when the meaning of one input depends on another.

How Multimodal AI Works

Multimodal AI systems work by combining multiple machine learning models that each specialize in a different data type. The outputs of these models are then integrated through neural network architectures designed to relate one data type to another.

The process generally involves three main stages:

1. Data Collection

AI systems collect data from different sources such as:

Text documents

Images

Videos

Audio recordings

Sensor data

Each data type provides unique information that helps the AI understand context better.

2. Data Processing

Each modality is processed by specialized AI models. For example:

Natural language processing models analyze text.

Computer vision models analyze images and videos.

Speech recognition models process audio.

These models convert the input into numerical representations that the AI system can analyze.
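The idea of turning each modality into a numerical representation can be illustrated with a minimal sketch. The two encoders below are hypothetical toy stand-ins (a hashed bag-of-words for text and an intensity histogram for a grayscale image), not the trained neural networks a real system would use. The function names and the vector size of 8 are assumptions chosen for illustration.

```python
import hashlib


def encode_text(text: str, dim: int = 8) -> list[float]:
    """Toy text encoder (assumption): hashed bag-of-words, scaled by word count."""
    vec = [0.0] * dim
    words = text.lower().split()
    for word in words:
        # A stable hash maps each word to one of `dim` buckets.
        bucket = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    total = len(words) or 1
    return [v / total for v in vec]


def encode_image(pixels: list[list[int]], dim: int = 8) -> list[float]:
    """Toy image encoder (assumption): histogram of grayscale values in 0-255."""
    vec = [0.0] * dim
    flat = [p for row in pixels for p in row]
    for p in flat:
        # Map an intensity in 0-255 to one of `dim` equal-width buckets.
        bucket = min(p * dim // 256, dim - 1)
        vec[bucket] += 1.0
    total = len(flat) or 1
    return [v / total for v in vec]


text_vec = encode_text("a dog playing in the park")
image_vec = encode_image([[0, 64, 128], [192, 255, 32]])
print(len(text_vec), len(image_vec))
```

Both inputs end up as vectors of the same length, which is what makes it possible to compare or combine them in a later step.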

3. Data Fusion

Once the data is processed, the system combines the information from different sources. This step is called multimodal fusion.

Through this fusion process, the AI system learns relationships between different data types. For example, it can connect an image with a caption or match speech with facial expressions.

The final result is a system that can understand complex situations more effectively.
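As a rough illustration of fusion, the sketch below shows two simple ways of combining a text vector and an image vector: concatenation and a weighted average. These are only two of many possible strategies, and the function names, example vectors, and default weight are assumptions made for this example. Production systems typically learn how to combine modalities rather than using fixed rules like these.

```python
def concat_fusion(text_vec, image_vec):
    """Join the two vectors end to end so a downstream model sees both."""
    return list(text_vec) + list(image_vec)


def weighted_fusion(text_vec, image_vec, text_weight=0.5):
    """Blend two equal-length vectors element by element.

    `text_weight` (an assumed parameter) controls how much the text
    vector contributes; the image vector gets the remainder.
    """
    if len(text_vec) != len(image_vec):
        raise ValueError("vectors must have the same length")
    image_weight = 1.0 - text_weight
    return [text_weight * t + image_weight * i
            for t, i in zip(text_vec, image_vec)]


# Hypothetical example vectors standing in for encoder outputs.
text_features = [1.0, 0.0]
image_features = [0.0, 1.0]

print(concat_fusion(text_features, image_features))
print(weighted_fusion(text_features, image_features))
```

Concatenation keeps every feature from both inputs at the cost of a longer vector, while averaging keeps the size fixed but requires both vectors to share the same length and meaning per position.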

Key Benefits of Multimodal AI

Multimodal AI provides several advantages that make it one of the most promising areas of artificial intelligence.

1. Better Context Understanding

By analyzing multiple types of data, multimodal AI gains a deeper understanding of context. This improves accuracy and reduces misunderstandings.

2. More Natural Human Interaction

Humans communicate using voice, text, gestures, and visual cues. Multimodal AI enables machines to interact with humans in a more natural and intuitive way.

3. Improved Decision Making

Combining multiple data sources allows AI systems to make more informed decisions. This is especially useful in industries like healthcare, finance, and security.

4. Enhanced User Experience

Applications powered by multimodal AI can provide more personalized and intelligent experiences for users.

Real-World Applications of Multimodal AI

Multimodal AI is already being used across many industries. As the technology continues to improve, its applications will become even more widespread.

Healthcare

In healthcare, multimodal AI can analyze medical images, patient records, and lab results together. This helps doctors make more accurate diagnoses and treatment decisions.

For example, an AI system may analyze an X-ray image while also reviewing patient symptoms and medical history.

Autonomous Vehicles

Self-driving cars rely on multimodal AI to interpret data from cameras, radar, GPS, and other sensors. By combining these data sources, the vehicle can better understand its surroundings.

This allows autonomous vehicles to detect obstacles, recognize traffic signals, and navigate safely.
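One simple way to picture how readings from several sensors might be combined is inverse-variance weighting, where more reliable sensors count for more. The sketch below is an illustrative assumption, not a description of how any particular vehicle works. The sensor names, distances, and variances are made-up values.

```python
def fuse_estimates(estimates):
    """Combine (value, variance) pairs using inverse-variance weights.

    A smaller variance means a more trusted sensor, so it receives
    a larger weight in the combined estimate.
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    weights = []
    for _, variance in estimates:
        if variance <= 0:
            raise ValueError("variance must be positive")
        weights.append(1.0 / variance)
    total = sum(weights)
    return sum(w * value for w, (value, _) in zip(weights, estimates)) / total


# Hypothetical distance-to-obstacle readings in meters.
camera = (10.0, 4.0)  # noisier estimate
radar = (12.0, 1.0)   # more precise estimate
fused_distance = fuse_estimates([camera, radar])
print(fused_distance)
```

The fused value lands closer to the radar reading because the radar was given the smaller variance in this example.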

Virtual Assistants

Modern virtual assistants are becoming increasingly multimodal. They can process voice commands, understand text messages, and even analyze images.

This capability allows them to provide more useful and personalized responses.

E-Commerce

Multimodal AI is transforming online shopping. Customers can search for products using images instead of text.

For example, someone can upload a photo of a product and instantly find similar items in an online store.
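Image-based product search is commonly built on comparing numerical representations of images. The sketch below assumes each product photo has already been converted into a small vector and ranks catalog items by cosine similarity to the uploaded photo. The catalog entries, vector values, and function names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_similar(query_vec, catalog, top_k=2):
    """Rank catalog items by similarity to the query, best match first."""
    scored = [(name, cosine_similarity(query_vec, vec))
              for name, vec in catalog.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical image vectors for three products.
catalog = {
    "red sneaker": [0.9, 0.1, 0.0],
    "blue sneaker": [0.1, 0.9, 0.0],
    "red handbag": [0.8, 0.0, 0.6],
}
uploaded_photo = [1.0, 0.0, 0.0]
results = find_similar(uploaded_photo, catalog)
print([name for name, _ in results])
```

With these made-up vectors, the two red items rank above the blue one because their vectors point in a direction closer to the uploaded photo's vector.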

Content Creation

AI systems can now generate text, images, audio, and video together. This opens new possibilities for digital marketing, entertainment, and media production.

Multimodal AI tools help creators produce content faster and more efficiently.

Multimodal AI vs Traditional AI

Understanding the difference between multimodal AI and traditional AI is important.

Traditional AI systems focus on one type of data input. For example:

Text-only AI systems process written information.

Image recognition systems analyze visual content.

Speech recognition systems process audio.

Multimodal AI combines these abilities into a unified system.

This allows AI to interpret complex real-world situations where multiple data types are involved.

As a result, multimodal systems are more flexible, powerful, and capable of solving complex problems.

Challenges of Multimodal AI

Despite its potential, multimodal AI still faces several challenges.

Data Complexity

Handling multiple data types requires massive amounts of data and computing power. Training multimodal models can be expensive and time-consuming.

Data Alignment

Different types of data must be aligned correctly for the AI system to understand relationships between them. This process can be technically difficult.
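A small example of alignment is matching each spoken word in a video to the frame shown at that moment. The sketch below pairs words with the nearest frame by timestamp, assuming both streams share a common clock and the frame timestamps are sorted. The timestamps and names are invented for illustration, and real alignment problems are often harder because the streams are not synchronized this cleanly.

```python
from bisect import bisect_left


def nearest_frame(frame_times, t):
    """Return the index of the frame whose timestamp is closest to t.

    `frame_times` must be sorted in ascending order.
    """
    if not frame_times:
        raise ValueError("frame_times must not be empty")
    pos = bisect_left(frame_times, t)
    if pos == 0:
        return 0
    if pos == len(frame_times):
        return len(frame_times) - 1
    before, after = frame_times[pos - 1], frame_times[pos]
    # Pick whichever neighbor is closer in time; ties go to the earlier frame.
    return pos if after - t < t - before else pos - 1


# Hypothetical data: one frame every 0.5 seconds and three spoken words.
frame_times = [0.0, 0.5, 1.0, 1.5, 2.0]
words = [("hello", 0.1), ("world", 0.8), ("again", 1.9)]
aligned = [(word, nearest_frame(frame_times, t)) for word, t in words]
print(aligned)
```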

Bias and Ethical Concerns

Like other AI systems, multimodal models can inherit biases from training data. Ensuring fairness and transparency remains a major challenge.

Privacy Issues

Multimodal systems often rely on sensitive data such as images, voice recordings, and personal information. Protecting user privacy is essential.

The Future of Multimodal AI

Experts believe multimodal AI will play a major role in the next generation of intelligent systems.

In the future, AI systems may be able to understand the world almost as humans do by combining visual, auditory, and textual information.

Some possible future developments include:

Advanced personal AI assistants

More intelligent robots

Improved medical diagnostics

Smarter education technologies

Highly immersive virtual reality experiences

As research continues, multimodal AI will likely become a foundation for more powerful artificial intelligence systems.

The future of artificial intelligence will rely heavily on systems that can understand multiple types of information at once.

If you want to learn about the next generation of autonomous AI systems, you can read our detailed guide on Agentic AI and how autonomous AI agents work.

Why Multimodal AI Matters

Multimodal AI represents a major step forward in the evolution of artificial intelligence. By enabling machines to understand multiple forms of information simultaneously, it brings AI closer to human-level perception.

Organizations across industries are already exploring ways to integrate multimodal capabilities into their systems.

Businesses that adopt this technology early may gain significant competitive advantages in the years ahead.

Conclusion

Multimodal AI is transforming the way artificial intelligence systems understand and interact with the world. By combining text, images, audio, and other data types, these systems can interpret complex information more effectively than traditional AI models.

From healthcare and autonomous vehicles to e-commerce and content creation, multimodal AI is driving innovation across many industries.

Although challenges remain, the future of this technology looks extremely promising. As research and development continue, multimodal AI will likely become a core component of the next generation of intelligent systems.

Learn more about multimodal AI research here:

https://ai.googleblog.com

FAQs

What is multimodal AI in simple terms?

Multimodal AI is a type of artificial intelligence that can process and understand multiple types of data such as text, images, audio, and video at the same time.

Why is multimodal AI important?

Multimodal AI allows machines to understand context more accurately by combining different types of information. This leads to better decision-making and more natural interactions with humans.

Where is multimodal AI used?

Multimodal AI is used in healthcare, autonomous vehicles, virtual assistants, e-commerce, content creation, and many other industries.

Is multimodal AI the future of artificial intelligence?

Many experts believe multimodal AI represents the next major step in AI development because it allows machines to interpret information in a more human-like way.

What are the challenges of multimodal AI?

The main challenges include data complexity, high computing requirements, data alignment, bias and other ethical concerns, and privacy issues.